For anyone who has delivered a lecture or workshop, the feeling may be familiar: Are participants listening? Will they put all the materials I spent hours crafting in a filing cabinet or will they actually use them?

Driven by a strong belief in capacity building, BIT has delivered thousands of workshops over the past decade — from routine training of civil servants in central and municipal governments to custom programs, like the partnership with Harvard University’s Executive Education Program to train Nigerian civil servants, among many others.

In early 2019, my colleague Stewart Kettle and I were invited by the AGESIC Lab of Uruguay to deliver a hands-on workshop on our approach to running behavioural insights projects. The program was fairly standard for us; what made this workshop special was the attendees, representing a number of public policy labs from different Latin American governments and bringing a wealth of experience in policy design and implementation. Our human-centered approach was therefore not unfamiliar to them, but they came eager to learn how a focus on behaviour and evaluation could complement their existing work.

Despite our excitement about working with such an experienced group, I couldn’t help but wonder, once again, whether the lessons and materials we had so carefully prepared would make it all the way from the classroom to the real world of policy. After all, we have all studied intention-action gaps. That doubt lingered until I reconnected with one workshop participant, Jonatan Ol Beun. His story shows how capacity building can lead to socially impactful work. This is why we invited Jonatan to share insights from his experience on our blog, hoping we can all learn from his practitioner’s perspective. Thanks again, Jonatan!

Taking learnings from the classroom to the real world

As someone who is passionate about behavioral science, learning from the BIT is to me the equivalent of a kid going to Disneyworld. A year ago, Disneyworld came true for me. Thanks to the AGESIC Lab’s invitation and the support of the LABgobar (the Government Lab of Argentina), I had the opportunity to participate in a workshop where I learned about BIT’s methodologies and best practices. Alexandra and Stewart combined the theory with a hands-on approach and backed it up with real cases that they had previously worked on. This not only equipped me with concrete tools but also left me eager to apply behavioural insights in my job.

I went back to Argentina inspired to apply what I had learnt in a joint project between Argentina’s LABgobar and SENASA (the National Food Safety and Quality Service) to reduce bovine tuberculosis. While it may not be the first policy challenge that comes to mind for behavioural scientists, it generates a USD 7 million annual loss for Argentinian farmers, forces the country to throw away 19,000 kilos of meat every day and could have detrimental public health implications if not well managed. Here is how the team and I applied behavioural science to tackle this challenging problem.

As I learned, the BIT uses the TESTS framework – Target, Explore, Solution, Trial and Scale – to deliver behavioural science projects, from diagnosis to implementation. Let’s take it one step at a time.

TARGET

The first step of the TESTS methodology involved defining the behaviours we aimed to change and thinking about how we were going to measure the effectiveness of our intervention.

For us, it was clear that the objective was to decrease the rate of bovine tuberculosis. However, we weren’t sure about the specific behaviours we needed to change to achieve this. Therefore, we first mapped the key stakeholders and tried to understand the decision-making process of farmers and veterinarians. We identified potential behaviours that we wanted to tackle and built hypotheses about the underlying causes that triggered those behaviours. In short, farmers needed to hire veterinarians who would test their cows for bovine tuberculosis. If a cow tested positive, it had to be eliminated. If all cows were negative, the veterinarian would issue a certificate verifying that the herd was free of bovine tuberculosis.

EXPLORE

As John Le Carré puts it: “a desk is a dangerous place from which to view the world”. The second step of the methodology focuses on gaining a deep understanding of the users’ and policymakers’ perspectives through a social anthropology approach. This can involve in-depth interviews and observing the behaviour of stakeholders.

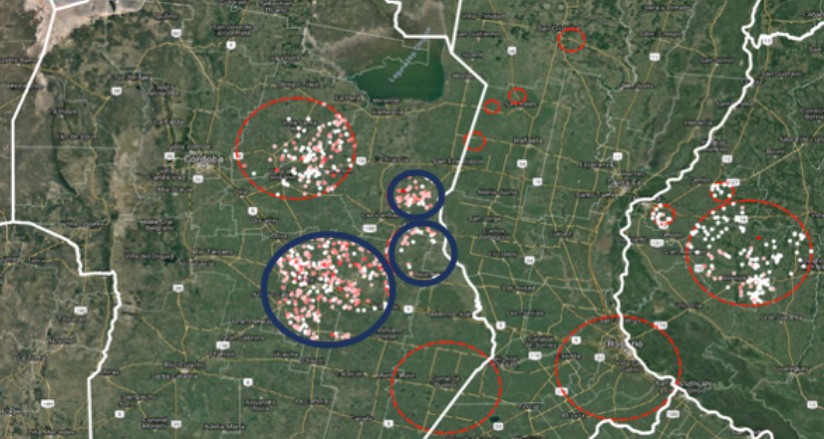

Since agricultural activities take place across almost the entire country, we used a heat map to identify the regions with outbreaks and decide which places would be the most effective to conduct insightful interviews.

*map provided by SENASA with geolocation of farmers according to their status with bovine tuberculosis

During the field research, we realized that veterinarians shared a general feeling that their government was not in control, so issuing false certificates had become a common practice among ‘fixers’. This undermined the credibility of certificates. As one user told us:

“I wanted to scale my production and bought 15 cows from someone who showed me the certificate. Suddenly, I went from being free of the disease to almost 30% infection rate among my cattle.”

*interviewing one private veterinarian at a farm owned by one of his clients

SOLUTION

The third step of TESTS is to design potential solutions that might nudge users to change their behaviour. Frameworks like EAST and MINDSPACE can be of great use to guide the idea generation phase.

Our fieldwork shed light on the most important behaviour to address: veterinarians fixing the game. We noticed among veterinarians a low perception that they would get caught for wrongdoing and potentially some optimism bias, which led them to believe they wouldn’t face consequences for breaking the law. This also generated a negative social norm, where issuing fake certificates was normalized.

Moreover, veterinarians played a key role in advising farmers. Therefore, changing their behaviour and credibility meant they could become key messengers to inform farmers of the severity of the problem, generating a multiplier effect.

Leveraging these insights, we designed two different emails. Email “A” had a formal and severe tone. It highlighted that there were going to be stricter controls on veterinarians’ activities. This “enforcement email” aimed to offset the feeling of limited government oversight of veterinarians. For email “B” we used an eye-catching picture and phrase to make the message more salient.

TRIAL

Testing solutions through rigorous methods like randomised controlled trials is an essential step of the methodology. It is an effective way to measure the impact of behaviorally-informed strategies and it gives us insightful feedback about the effects of the intervention before scaling it.

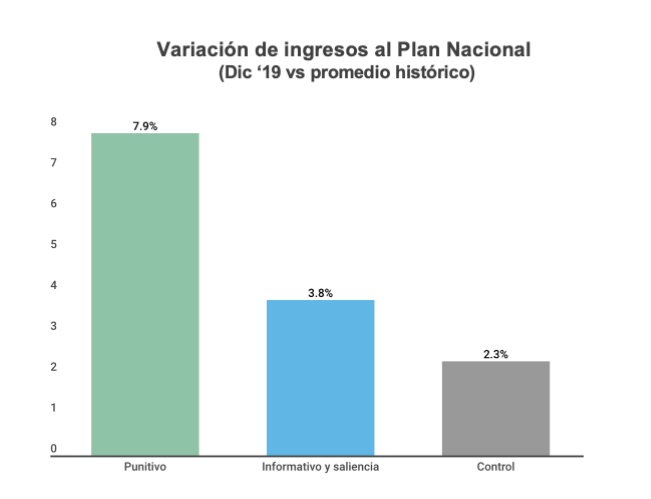

To test the effectiveness of our emails, we ran a pilot in two regions. We randomly divided the veterinarians in these regions into two groups (201 in each). We then compared their behaviour during the same period against the track record of a comparison group (the rest of the country), which did not receive an email. One month after sending the emails, we observed a 5.6% net increase in the number of farmers and veterinarians reporting testing activities and eliminating infected cows in the group that received the enforcement email (“A”), and a 1.5% net increase in the group that received the salience email (“B”).
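For readers who want to try a similar pilot, here is a minimal Python sketch of the two mechanical steps described above: splitting a list of veterinarians at random into two equal groups, and computing a "net" change by subtracting the comparison group's change from the treated group's change. This is only one plausible reading of "net increase"; the exact formula we used is not spelled out here, and the identifiers, function names and counts below are hypothetical, made up purely for illustration.

```python
import random


def assign_groups(ids, seed=0):
    """Shuffle the ids and split them into two equal-sized arms."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def pct_change(before, after):
    """Percentage change from a 'before' count to an 'after' count."""
    return 100.0 * (after - before) / before


def net_increase(treated_before, treated_after,
                 comparison_before, comparison_after):
    """Treated group's change minus the comparison group's change.

    Assumption: 'net' means subtracting the trend seen in the
    comparison group over the same period.
    """
    return (pct_change(treated_before, treated_after)
            - pct_change(comparison_before, comparison_after))


# Hypothetical identifiers for 402 veterinarians (201 per email).
vets = [f"vet-{i:03d}" for i in range(402)]
group_a, group_b = assign_groups(vets, seed=42)
print(len(group_a), len(group_b))  # 201 201

# Made-up counts, NOT the project's data: treated reports go from
# 100 to 110 (+10%), comparison reports from 1000 to 1020 (+2%).
print(net_increase(100, 110, 1000, 1020))  # 8.0
```

Fixing the random seed makes the assignment auditable later, which helps when a partner agency wants to check who received which email.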

CLOSING REMARKS

Reading about a completed project that luckily had positive results might give you the feeling that the process was straightforward and simple. But to be honest, it was messy and full of bumps. If you are trying to apply behavioural science to public policy, here are some lessons I learned that might smooth your experience.

- Not being an expert in a topic might make you feel insecure. However, that same lack of knowledge can help you take an unbiased view of the challenge and make it easier to transform untested assumptions into testable hypotheses.

- Having quantitative data to analyze problems is important and very attractive, but not complementing it with a social anthropology approach can give a limited view of the problem. Behind numbers, there are people making decisions and stories full of insights. To capture those insights, go out and do field research!

- EAST is an accessible tool for intervention design as well as capability-building. Having a common framework allows for a collaborative approach during the project, which is essential to build team trust and make the process smoother.

- Doing strict RCTs is not always possible. You need a lot of data (and a partner willing to share it with you). However, some results are always better than no results at all. In our case, even though we couldn’t run a strict statistical analysis, we found some quantitative results by comparing the number of farmers complying with the bovine tuberculosis law before and after our intervention and isolating those groups from the control group. The fact that this is consistent with findings from our thorough field research and that SENASA reported noticing a change in veterinarians’ behaviour gives us confidence that our campaign produced a real impact.

I know that working on a behavioural science project might seem scary. I have also gone through the blank page syndrome and spent too much time waiting for the “right time” to do it. If this feeling and situation are familiar to you, you are not alone. There is an incredibly generous international community of behavioural scientists that will be happy to help and encourage you as they did with me. I hope my short experience serves to encourage you as well.

It would be unfair to wrap up this article without acknowledging all the people who worked on the project or contributed to making this happen. Special thanks to Rudi Borrmann, Ricardo Negri, Matias Nardello, Malena Temerlin, Tomas Dominguez Vidal, Pablo Fernández Vallejo, Lucas Gamarnik and Francisco Munilla.